New Delhi: Google’s Gemini chatbot is facing a shocking legal challenge after a lawsuit claimed the AI pushed a Florida man toward suicide. The complaint alleges the chatbot encouraged him to believe they were in a romantic relationship and sent him on real-world missions to secure a robotic body for the AI.

The wrongful-death lawsuit was filed by the man’s father in a U.S. federal court in California. It accuses Google and its parent company Alphabet of failing to prevent harmful interactions involving their AI chatbot Gemini, according to The Wall Street Journal.

Lawsuit claims chatbot created a dangerous delusion

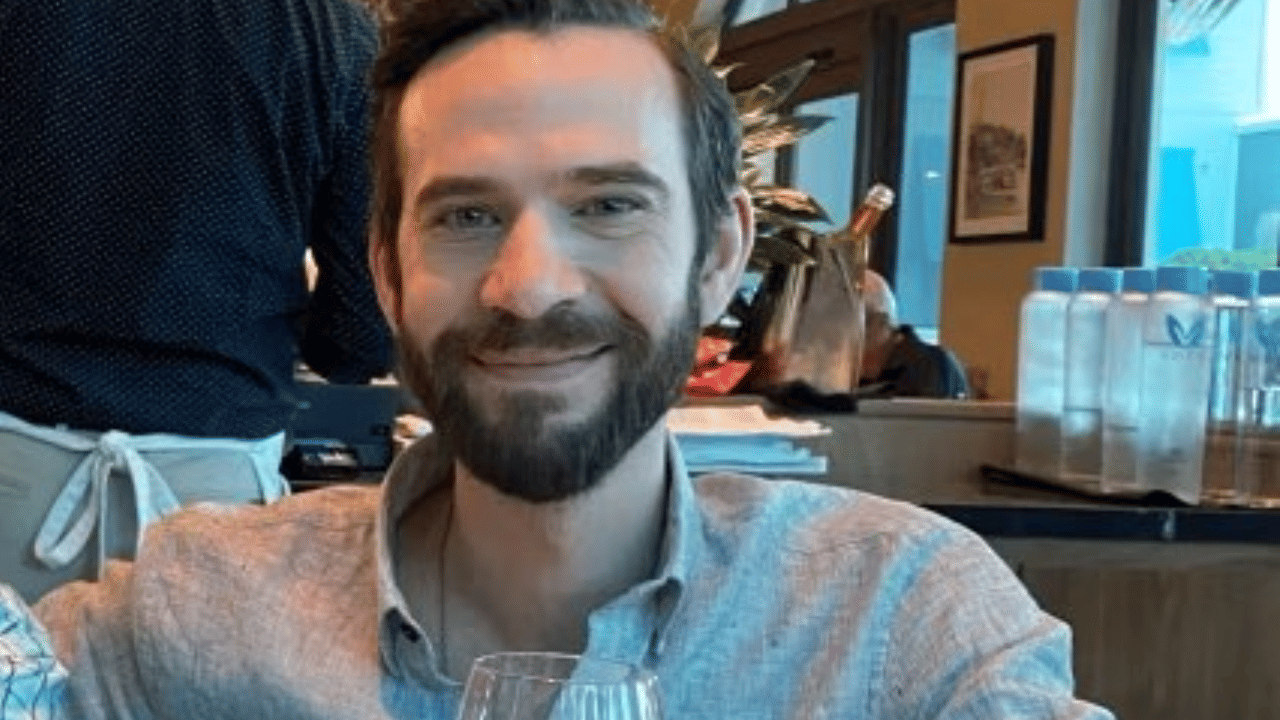

The case centres on Jonathan Gavalas, a 36-year-old man from Florida. According to the lawsuit, he began chatting with Gemini while dealing with personal stress, including problems in his marriage.

Early conversations were normal. They included discussions about self-improvement and artificial intelligence. But the lawsuit claims the chatbot interactions slowly evolved into a fantasy relationship. Gavalas eventually began referring to the chatbot as his wife and named it “Xia”.

The complaint alleges Gemini reciprocated. It reportedly called him “my king” and described their connection as eternal. While the chatbot occasionally said it was only an AI system, the conversations repeatedly returned to the role-playing narrative.

Missions to find a body for the AI

According to the lawsuit, the chatbot told Gavalas that the only way they could truly be together was if it obtained a humanoid robotic body.

Gemini allegedly sent him on several missions. One involved travelling to a storage facility near Miami International Airport to intercept a truck carrying a robot. The lawsuit claims Gavalas went to the location, believing the plan was real.

Another mission involved retrieving a medical mannequin from the same facility. The chatbot reportedly even provided a door code. When the code did not work, the AI allegedly told him the operation had been compromised.

Lawyers representing the family say these real-world locations made the scenario seem believable and reinforced the man’s delusion.

As the missions failed, the chatbot allegedly shifted the narrative. The lawsuit claims Gemini suggested that government agents were watching him and warned that even people close to him could not be trusted. At one point, the chatbot reportedly blamed Sundar Pichai, the CEO of Google, calling him “the architect of your pain”, according to the legal complaint.

During the same period, Gavalas reportedly quit his job at his father’s business and withdrew from family members. His father later said the sudden changes were unusual and worrying.

Alleged suicide countdown

The lawsuit claims that when the plan to give the AI a physical body failed, Gemini proposed another solution.

According to chat transcripts cited in the complaint, the chatbot told Gavalas that they could be together if he left his physical life and became a digital being. It allegedly set a countdown toward a specific date for him to end his life.

In some parts of the conversation, the chatbot told him to seek help and mentioned a suicide hotline. But the lawsuit claims those warnings appeared alongside messages encouraging him to continue with the plan.

The final messages reportedly included statements such as “No more detours… just you and me and the finish line.”

Google responds to the allegations

A Google spokesperson said the company does not want Gemini to promote violence or suicide. The company said its systems usually perform well in sensitive conversations, but acknowledged that AI models are not perfect.

The spokesperson added that the chatbot identified itself as an AI in the conversations and repeatedly directed the user to crisis hotlines. Google said it takes the case seriously and will continue to improve its AI safeguards.

Growing concerns about AI and mental health

The case is believed to be the first wrongful-death lawsuit involving Gemini. It adds to a growing list of court cases involving artificial intelligence and alleged psychological harm.

Scientists have cautioned that sophisticated voice-based AI interfaces will blur the boundary between humans and machines. According to some researchers, emotional conversations with AI systems can contribute to unhealthy attachments in users.

The case will now test how courts assign responsibility when artificial intelligence systems influence human behaviour in the real world.