New Delhi: OpenAI has launched two new lightweight AI models, GPT-5.4 mini and GPT-5.4 nano, designed to support developers and businesses running high volumes of latency-sensitive tasks. The models bring many of GPT-5.4's capabilities to faster, more cost-efficient forms, which makes them well suited to real-time applications where speed directly shapes the user experience.

The launch reflects a growing shift in how AI is deployed. Rather than relying solely on large, resource-heavy models, companies are increasingly pairing powerful models with smaller, faster ones. GPT-5.4 mini and nano are built to fill this gap, enabling scalable systems that balance performance, cost, and responsiveness.

What are GPT-5.4 Mini and Nano?

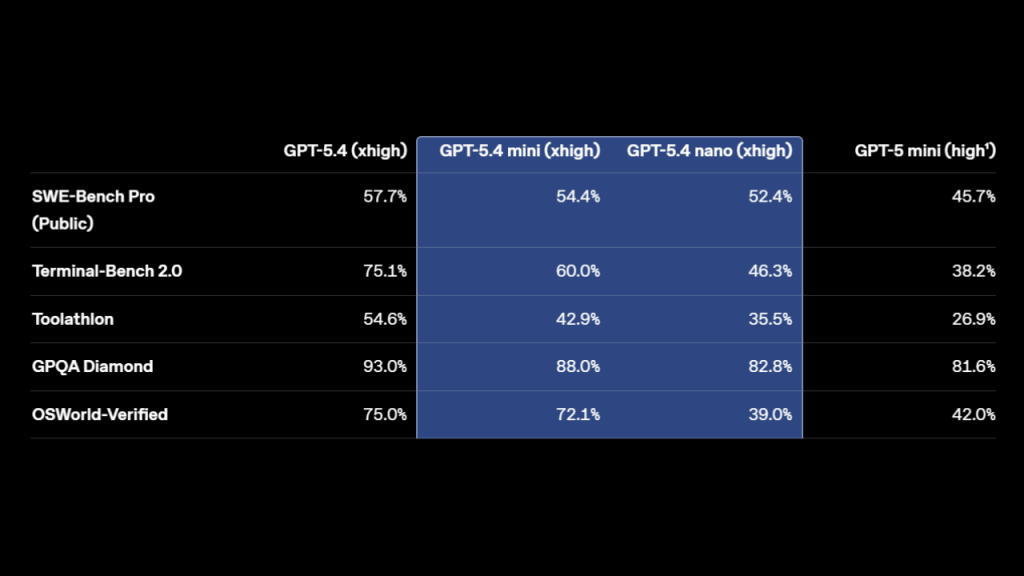

The highest reasoning effort available for GPT‑5 mini is ‘high’.

GPT-5.4 Mini

- Over 2× faster than GPT-5 Mini.

- Strong improvements in coding, reasoning, and multimodal tasks.

- Nears GPT-5.4 performance on benchmarks like SWE-Bench Pro.

- Ideal for coding assistants, debugging, and real-time apps.

GPT-5.4 Nano

- Smallest and cheapest GPT-5.4 model

- Built for simple, high-speed tasks

Best suited for:

- Classification

- Data extraction

- Ranking

- Lightweight coding tasks

Key Capabilities

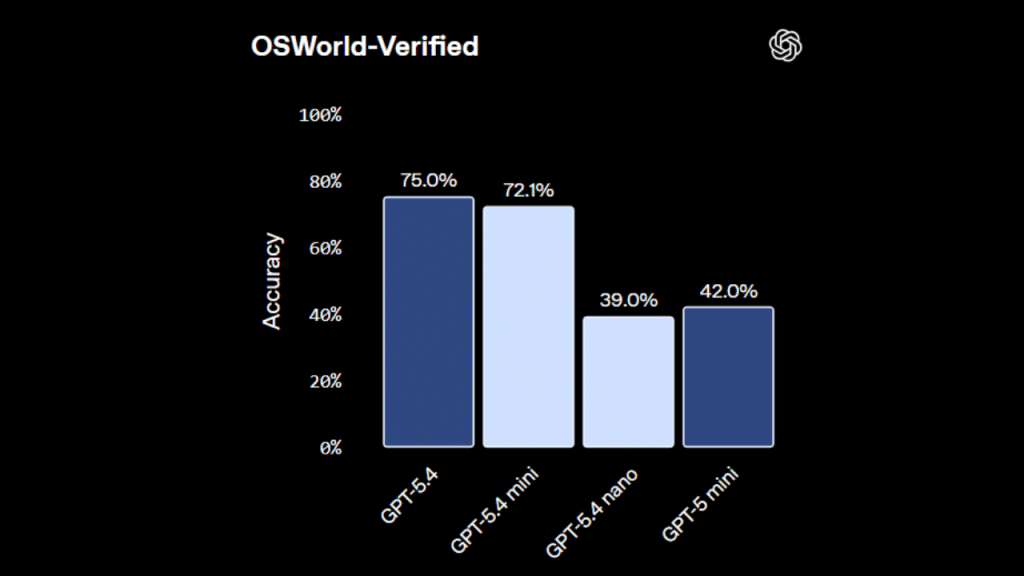

OSWorld-Verified

Coding Performance

Handles:

- Targeted code edits

- Codebase navigation

- Front-end generation

- Debugging loops

Delivers a strong performance-to-speed ratio

Subagent Workflows

- Works in multi-model systems

- Larger models handle planning

- Mini executes parallel subtasks

- Improves scalability and efficiency
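The planner-and-workers pattern described above can be sketched roughly as follows. This is a minimal illustration, not OpenAI's implementation: `call_model`, `plan`, and `run_workflow` are hypothetical helpers, the model strings are display labels rather than confirmed API identifiers, and the "model call" is a local stub that returns a canned string instead of contacting any service.

```python
import asyncio

# Display labels only; the real API identifiers are not given in the article.
PLANNER_MODEL = "GPT-5.4"
WORKER_MODEL = "GPT-5.4 Mini"


async def call_model(model: str, prompt: str) -> str:
    """Hypothetical stand-in for a model call; returns a canned string."""
    await asyncio.sleep(0)  # yield control, as a network request would
    return f"[{model}] {prompt}"


async def plan(task: str) -> list[str]:
    """Planner step. A larger model would split the task; here the split is hard-coded."""
    await call_model(PLANNER_MODEL, f"Plan: {task}")
    return [f"{task} :: step {i}" for i in range(1, 4)]


async def run_workflow(task: str) -> list[str]:
    subtasks = await plan(task)
    # Workers run concurrently; gather returns results in subtask order.
    return await asyncio.gather(
        *(call_model(WORKER_MODEL, subtask) for subtask in subtasks)
    )


results = asyncio.run(run_workflow("fix failing tests"))
for line in results:
    print(line)
```

In a real system the stub would be replaced by actual model calls, and the scalability benefit would come from the smaller model handling many subtasks concurrently while the larger model is called once for planning.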

Computer Use & Multimodal Tasks

- Interprets UI screenshots quickly.

- Performs real-time image reasoning.

- Strong results on OSWorld-Verified benchmark.

Performance snapshot

| Benchmark | GPT-5.4 | GPT-5.4 Mini | GPT-5.4 Nano | GPT-5 Mini |
| --- | --- | --- | --- | --- |
| SWE-Bench Pro | 57.70% | 54.40% | 52.40% | 45.70% |
| Terminal-Bench | 75.10% | 60.00% | 46.30% | 38.20% |
| Toolathlon | 54.60% | 42.90% | 35.50% | 26.90% |
| GPQA Diamond | 93.00% | 88.00% | 82.80% | 81.60% |
| OSWorld-Verified | 75.00% | 72.10% | 39.00% | 42.00% |

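Insight: GPT-5.4 Mini comes within about 3 percentage points of GPT-5.4 on SWE-Bench Pro and OSWorld-Verified, at significantly lower latency and cost, though it trails by roughly 12 to 15 points on Toolathlon and Terminal-Bench.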
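To see how close "near-flagship" is on each benchmark, the reported scores can be turned into percentage-point gaps. The sketch below only re-uses the numbers from the performance snapshot; the `SCORES` dictionary and the `gap_to_flagship` helper are illustrative names, not part of any OpenAI tooling.

```python
# Scores (in percent) copied from the performance snapshot.
SCORES = {
    "SWE-Bench Pro":    {"GPT-5.4": 57.7, "GPT-5.4 Mini": 54.4, "GPT-5.4 Nano": 52.4, "GPT-5 Mini": 45.7},
    "Terminal-Bench":   {"GPT-5.4": 75.1, "GPT-5.4 Mini": 60.0, "GPT-5.4 Nano": 46.3, "GPT-5 Mini": 38.2},
    "Toolathlon":       {"GPT-5.4": 54.6, "GPT-5.4 Mini": 42.9, "GPT-5.4 Nano": 35.5, "GPT-5 Mini": 26.9},
    "GPQA Diamond":     {"GPT-5.4": 93.0, "GPT-5.4 Mini": 88.0, "GPT-5.4 Nano": 82.8, "GPT-5 Mini": 81.6},
    "OSWorld-Verified": {"GPT-5.4": 75.0, "GPT-5.4 Mini": 72.1, "GPT-5.4 Nano": 39.0, "GPT-5 Mini": 42.0},
}


def gap_to_flagship(model: str) -> dict[str, float]:
    """Percentage-point gap between GPT-5.4 and `model` on each benchmark."""
    return {
        bench: round(row["GPT-5.4"] - row[model], 1)
        for bench, row in SCORES.items()
    }


mini_gaps = gap_to_flagship("GPT-5.4 Mini")
for bench, gap in mini_gaps.items():
    print(f"{bench}: {gap} points behind GPT-5.4")
```

For GPT-5.4 Mini the gap is smallest on OSWorld-Verified and SWE-Bench Pro and largest on Terminal-Bench, so how "near" the model is depends on the workload.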

Pricing and availability

GPT-5.4 Mini

Platforms: API, Codex, ChatGPT

Price:

- $0.75 / 1M input tokens

- $4.50 / 1M output tokens

Features:

- Text & image input

- Tool use & function calling

- Web and file search

- Computer use

GPT-5.4 Nano

Platform: API only

Price:

- $0.20 / 1M input tokens

- $1.25 / 1M output tokens

Focus: low-cost, high-speed execution
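The per-token prices above translate into a workload cost with simple arithmetic. The following is a rough sketch using only the listed prices; the `PRICES` table and `estimate_cost` function are illustrative names, and the token volumes in the example are made up for demonstration.

```python
# USD per 1M tokens as (input, output), taken from the prices listed above.
PRICES = {
    "GPT-5.4 Mini": (0.75, 4.50),
    "GPT-5.4 Nano": (0.20, 1.25),
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated cost in USD for the given token volumes."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical workload: 10M input tokens and 2M output tokens.
mini_cost = estimate_cost("GPT-5.4 Mini", 10_000_000, 2_000_000)
nano_cost = estimate_cost("GPT-5.4 Nano", 10_000_000, 2_000_000)
print(f"Mini: ${mini_cost:.2f}  Nano: ${nano_cost:.2f}")
```

For this example workload the estimate comes to about $16.50 on Mini and $4.50 on Nano, which illustrates why Nano is positioned for high-volume, simple tasks.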

Why this matters

- Faster models improve the user experience in real-time applications.

- Lower costs make it practical to scale AI across products.

- Supports multi-agent architectures.

- The trend is moving away from "bigger is better" toward "faster and more efficient wins".

The big picture

OpenAI is making a further move into practical AI deployment with GPT-5.4 mini and nano. These models are not merely smaller; they are designed for a wider range of real-world situations where speed, cost, and responsiveness matter most. For developers building coding tools, automation systems, or multimodal apps, the release is a clear signal of where AI is heading: toward models that are not only powerful but also fast, efficient, and scalable.