New Delhi: Anthropic has taken the US government to court in a dispute that could shape how artificial intelligence is used by the military. The company, led by Dario Amodei, filed a lawsuit after the Pentagon placed it on a national security supply chain risk list, a move that could limit the use of its Claude AI system across government operations.

The conflict started after the Pentagon raised concerns about restrictions Anthropic placed on how its AI technology can be used. The company had refused to remove guardrails that block the use of its models for autonomous weapons or domestic surveillance. Now the dispute has moved to federal court, with the outcome likely to influence how governments and AI companies negotiate the rules around military technology.

Anthropic challenges Pentagon blacklist in court

According to Reuters, Anthropic filed its lawsuit in a federal court in California, asking a judge to overturn the Pentagon’s designation. The company said the action violated its constitutional rights.

In the filing, Anthropic argued that the government had overstepped its authority.

“These actions are unprecedented and unlawful. The Constitution does not allow the government to wield its enormous power to punish a company for its protected speech,” the company said in its lawsuit.

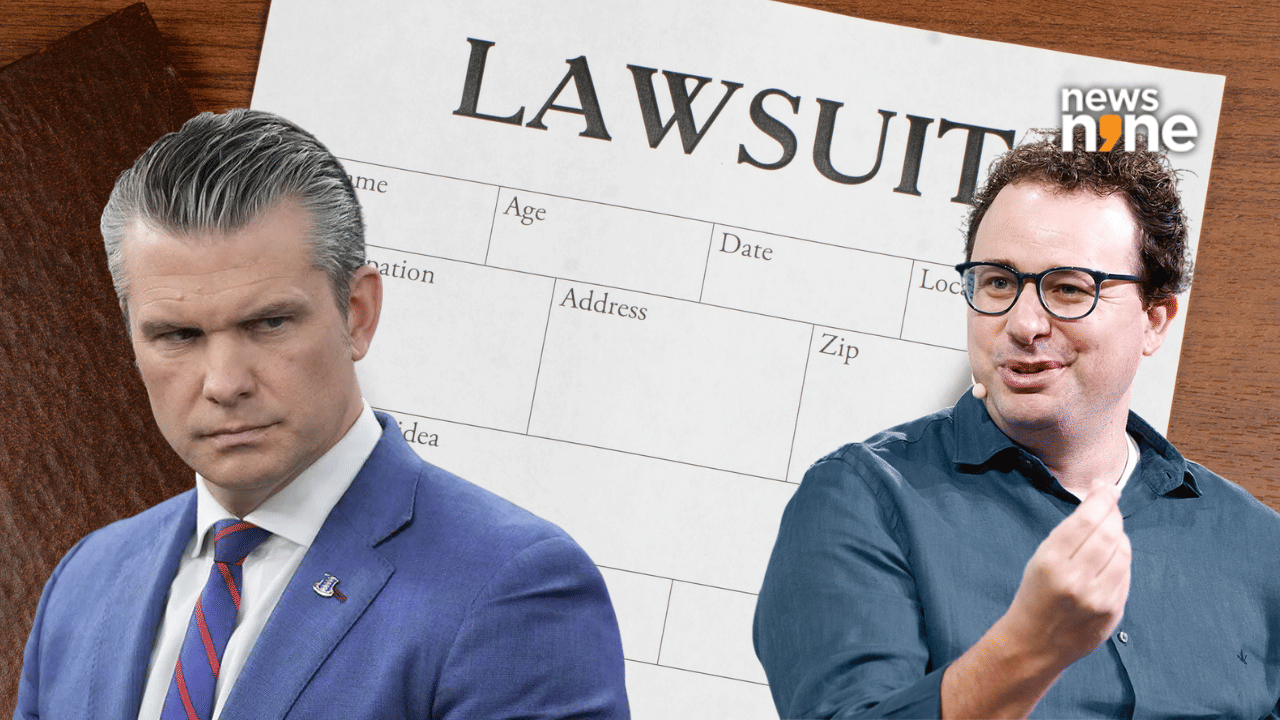

The Pentagon made the designation after months of tense talks with the AI firm. Defence Secretary Pete Hegseth placed the supply chain risk label on Anthropic after the company refused to loosen restrictions around how its AI could be used in military operations.

Why the Pentagon moved against the AI firm

Officials in Washington argue that national defence policy should not be limited by private companies. According to the report, the Pentagon insisted that US law must determine how AI tools can be used for security operations.

The dispute has focused on two key areas where Anthropic placed restrictions:

- Fully autonomous AI weapons

- Domestic surveillance of US citizens

Anthropic has said current AI systems are not reliable enough for autonomous weapons. The company believes such use could be dangerous.

Business impact could be significant

The government designation could have a serious financial impact on Anthropic. Company finance chief Krishna Rao warned that it could cut hundreds of millions or even billions of dollars from revenue projections for 2026.

Analysts say the dispute may make some corporate customers cautious. Wedbush analyst Dan Ives said the situation could affect enterprise adoption of Claude AI in the near term.

“This could have a ripple impact for Anthropic and Claude potentially on the enterprise front over the coming months as some enterprises could go pencils down on Claude deployments while this all gets settled in the courts.”

AI policy battle reaches a turning point

The legal fight goes beyond one company. Industry experts see the case as an early test of how much control governments will have over powerful AI systems.

Anthropic had earlier worked closely with US national security agencies and even pursued defence partnerships. In the past year, the Pentagon has signed AI contracts worth up to $200 million each with several AI labs, including Anthropic, OpenAI and Google.

The court case will now decide whether Washington can restrict an AI company for refusing to remove safety limits from its technology. The decision may influence how AI companies handle government contracts in the future.